Quantum computers are not simply faster classical computers. That distinction matters more than most headlines suggest. Quantum computing uses qubits that leverage superposition, entanglement, and interference to perform computations across multiple states simultaneously, something a classical bit, strictly 0 or 1, can never do. This guide cuts through the noise to give you a working understanding of quantum computing's principles, hardware realities, current limitations, and where the technology is genuinely headed.

Table of Contents

- Defining quantum computing: How does it differ from classical computing?

- Fundamental principles: Superposition, entanglement, and interference

- Core components: Qubits, quantum gates, and circuits

- Technologies in focus: Superconducting vs. trapped-ion vs. photonic qubits

- Current state: Achievements, limitations, and benchmarks

- What quantum computers can do (and what they can't)

- The debate: Scaling, error correction, and skepticism

- Future outlook: Quantum's path ahead and what to watch

- Stay ahead of the quantum curve

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Quantum is not just speed | Quantum computers use fundamentally new principles and are not simply 'faster classical computers.' |
| No technology winner yet | Superconducting, trapped-ion, and photonic qubits each have strengths and challenges. |
| Practical advantage is coming | Quantum systems must scale and correct errors to outperform classical machines in real applications. |
| Industry impact is selective | Sectors like chemistry, finance, and optimization stand to gain the most from quantum breakthroughs. |
| Debate and skepticism remain | Scaling and error correction challenges fuel ongoing debate among experts about the future of quantum computing. |

Defining quantum computing: How does it differ from classical computing?

Classical computers process information as bits, each one a definitive 0 or a 1. Every calculation is a sequence of these binary decisions. Quantum computers replace bits with qubits, and that swap changes everything about how computation works.

Qubits leverage superposition, entanglement, and interference to process information in ways that have no classical equivalent. Superposition lets a qubit exist as a weighted blend of 0 and 1 until it is measured. Entanglement links qubits so that measuring one tells you about the state of another, regardless of distance, although this correlation cannot be used to send a signal faster than light. Interference then steers the computation, amplifying paths that lead to correct answers and canceling paths that don't.

Think of it this way: a classical computer tries every door in a hallway one at a time. A quantum computer does not open every door at once and read off the answer; instead, interference raises the probability that the single door it finally opens, at measurement, is the correct one. The result is not a blanket speed boost but a large, in some cases exponential, advantage for specific problem types.
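
One way to make the door analogy concrete is Grover's search, an algorithm this guide does not name and that is used here purely as an illustration. The sketch below is a classical simulation in plain Python, so it shows how interference concentrates probability on one answer rather than demonstrating any speedup. The door count, the `target` index, and the function name are assumptions made for the example. Grover's advantage is also quadratic (roughly √N steps instead of N), not exponential.

```python
import math

def grover_probabilities(n_doors, target, iterations):
    """Classically simulate Grover-style amplitude amplification.

    Returns the measurement probability of each door after the given
    number of iterations.
    """
    # Uniform superposition: every door starts with the same amplitude.
    amp = [1 / math.sqrt(n_doors)] * n_doors
    for _ in range(iterations):
        # Oracle: flip the sign of the target door's amplitude.
        amp[target] = -amp[target]
        # Diffusion: reflect every amplitude about the mean (interference).
        mean = sum(amp) / n_doors
        amp = [2 * mean - a for a in amp]
    return [a * a for a in amp]

n_doors = 64
target = 42  # hypothetical "correct door", chosen for illustration
best_iters = math.floor(math.pi / 4 * math.sqrt(n_doors))  # about sqrt(N) steps
probs = grover_probabilities(n_doors, target, best_iters)
print(f"{best_iters} iterations, P(target) = {probs[target]:.3f}")
```

Running more iterations than `best_iters` makes the target probability fall again, which is a reminder that interference has to be engineered carefully rather than simply applied as often as possible.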

"Quantum computers are engines for specific exponential speedups, not universal replacements for classical machines. The value is in knowing which problems fit the quantum model." — Quantum computing research consensus

The key quantum principles of superposition, entanglement, and interference define what kinds of problems quantum hardware can attack. For everything else, your laptop still wins. Tracking these distinctions is essential for anyone following future tech trends in this space.

| Feature | Classical computing | Quantum computing |
|---|---|---|
| Basic unit | Bit (0 or 1) | Qubit (superposition of 0 and 1) |
| Processing | Sequential/parallel binary | Quantum parallelism via superposition |
| Connectivity | Independent bits | Entangled qubits |
| Best use cases | General-purpose tasks | Optimization, simulation, cryptography |
| Error handling | Mature, reliable | Active research area |

Fundamental principles: Superposition, entanglement, and interference

These three principles are not just physics vocabulary. They are the actual mechanisms that give quantum computers their edge, and misunderstanding any one of them leads to wildly inflated or deflated expectations.

Superposition, entanglement, and interference work together as a system. Superposition means a qubit holds a weighted combination of 0 and 1 until it is measured. Entanglement means two or more qubits share a correlated quantum state that cannot be described independently. Interference means quantum operations can be designed to amplify the probability of correct answers while suppressing wrong ones.

Here is a useful analogy for each:

- Superposition: A coin spinning in the air is neither heads nor tails until it lands. A qubit is like that coin, but you can influence the outcome before it lands.

- Entanglement: Two dice that always show matching numbers no matter how far apart they are rolled. Measuring one instantly tells you the other.

- Interference: Noise-canceling headphones use wave interference to eliminate unwanted sound. Quantum algorithms use the same principle to eliminate wrong computational paths.

Pro Tip: Most misunderstandings about quantum computing come from ignoring what happens at measurement. The moment you measure a qubit, superposition collapses to a definite 0 or 1. The quantum advantage happens before measurement, during the computation itself.
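
To see superposition, interference, and measurement collapse in numbers, here is a minimal single-qubit sketch in plain Python. It represents a qubit as a pair of amplitudes and uses the Hadamard gate (introduced later in this guide) as the only operation. The helper names and the fixed random seed are assumptions for the example, not part of any quantum library.

```python
import math
import random

def hadamard(state):
    """Apply a Hadamard gate to a single-qubit state (a0, a1)."""
    a0, a1 = state
    s = 1 / math.sqrt(2)
    return (s * (a0 + a1), s * (a0 - a1))

def measure(state, rng):
    """Sample 0 or 1 with probability |amplitude|^2 (the Born rule)."""
    p0 = abs(state[0]) ** 2
    return 0 if rng.random() < p0 else 1

zero = (1.0, 0.0)        # qubit initialized to a definite 0
plus = hadamard(zero)    # superposition: equal weight on 0 and 1
back = hadamard(plus)    # interference: the two paths to 1 cancel

rng = random.Random(7)   # fixed seed, for repeatability only
shots = [measure(plus, rng) for _ in range(1000)]
print("amplitudes after one H:", plus)
print("amplitudes after two H:", back)
print("fraction of 1s in 1000 shots:", sum(shots) / 1000)
```

Each individual measurement of `plus` returns a plain 0 or 1; only the statistics over many shots reveal the underlying amplitudes. Applying the second Hadamard before measuring, by contrast, returns 0 every time, because the computation happened in the amplitudes before anything was read out.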

Core components: Qubits, quantum gates, and circuits

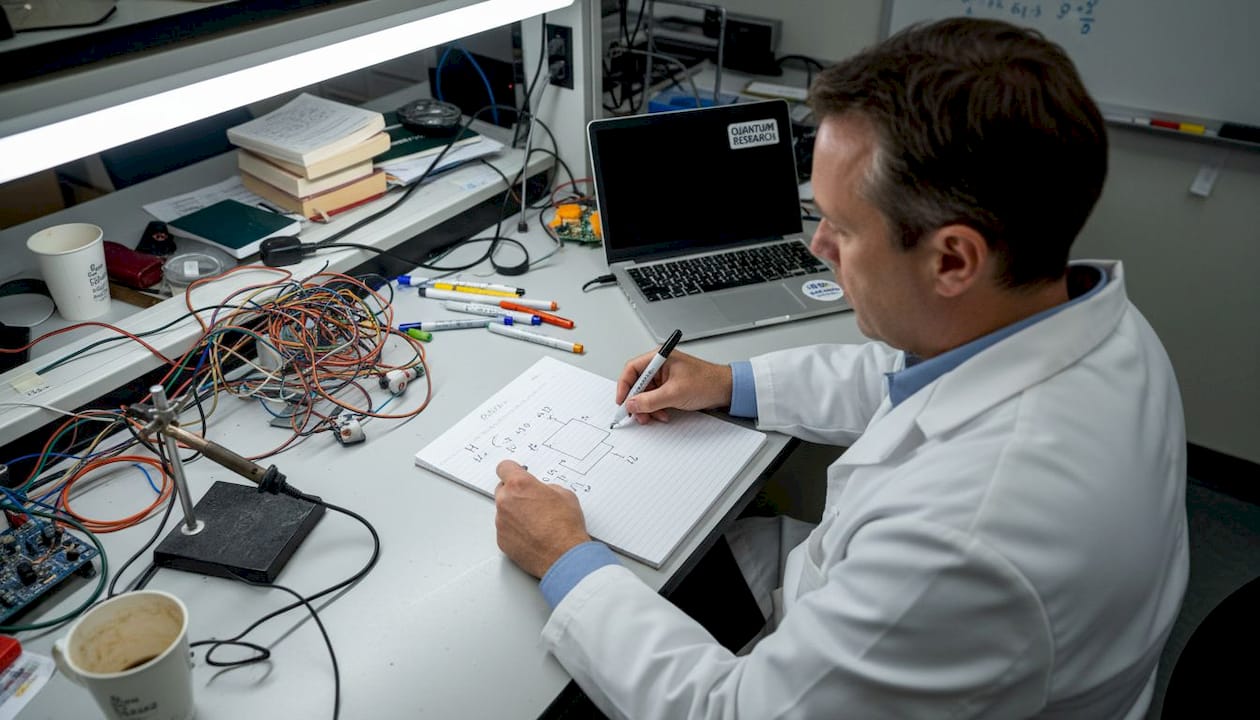

A working quantum computer is built from three layers: physical qubits, quantum gates that manipulate them, and circuits that chain those gates into algorithms.

Quantum gates and circuits manipulate qubits, and measurement collapses superposition into a classical output you can actually read. Gates are the quantum equivalent of logic gates in classical chips, but they operate on probability amplitudes rather than fixed binary values.

Here is the sequential flow of a quantum computation:

- Initialize qubits in a known state, typically all zeros.

- Apply quantum gates to create superposition and entanglement across qubits.

- Run the algorithm using gate sequences designed to amplify correct answers via interference.

- Measure the output, collapsing qubits to classical bits that encode the result.

- Repeat and sample: because quantum measurement is probabilistic, multiple runs are needed to build a statistical picture of the answer.
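
The flow above can be sketched with a tiny two-qubit simulator in plain Python. This is an illustrative toy, not how real hardware or any particular SDK works: the function names, the state layout `[a00, a01, a10, a11]`, and the seed are assumptions. The circuit only prepares an entangled pair, so the "run the algorithm" step is empty here.

```python
import math
import random
from collections import Counter

def apply_h(state, qubit):
    """Hadamard on one qubit of a 2-qubit state [a00, a01, a10, a11]."""
    s = 1 / math.sqrt(2)
    new = list(state)
    mask = 1 << (1 - qubit)  # qubit 0 is the left bit of the label
    for i in range(4):
        if not i & mask:
            a, b = state[i], state[i | mask]
            new[i], new[i | mask] = s * (a + b), s * (a - b)
    return new

def apply_cnot(state):
    """CNOT with qubit 0 as control and qubit 1 as target (swaps 10 and 11)."""
    a00, a01, a10, a11 = state
    return [a00, a01, a11, a10]

def run(shots, seed=0):
    rng = random.Random(seed)
    state = [1.0, 0.0, 0.0, 0.0]   # initialize both qubits to 0
    state = apply_h(state, 0)      # gate: put qubit 0 into superposition
    state = apply_cnot(state)      # gate: entangle qubit 1 with qubit 0
    probs = [abs(a) ** 2 for a in state]
    labels = ["00", "01", "10", "11"]
    # Measure, then repeat: each shot yields one classical bit string.
    return Counter(rng.choices(labels, weights=probs, k=shots))

counts = run(shots=1000)
print(counts)
```

Only `00` and `11` ever appear, in roughly equal numbers: each qubit on its own looks random, but the two results always match, which is the correlation that entanglement provides.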

Key gates include the Hadamard gate, which creates an equal superposition from a definite state; the Pauli X, Y, and Z gates, which flip or rotate a qubit's state; and the CNOT gate, which flips a target qubit only when a control qubit is 1 and can therefore entangle the two. These are the building blocks of most quantum algorithms in use today.
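
Because gates act on amplitudes, each single-qubit gate can be written as a 2×2 matrix. The sketch below, in plain Python with hand-rolled helpers (`matmul`, `dagger`, and `close` are names invented for this example), uses the standard textbook matrices to check two facts: every gate is unitary, so it is reversible and preserves total probability, and sandwiching Z between two Hadamards gives X.

```python
import math

S = 1 / math.sqrt(2)
H = [[S, S], [S, -S]]        # Hadamard
X = [[0, 1], [1, 0]]         # Pauli X (bit flip)
Y = [[0, -1j], [1j, 0]]      # Pauli Y
Z = [[1, 0], [0, -1]]        # Pauli Z (phase flip)
I2 = [[1, 0], [0, 1]]        # identity

def matmul(a, b):
    """Multiply two square matrices given as nested lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def dagger(a):
    """Conjugate transpose of a square matrix."""
    n = len(a)
    return [[complex(a[j][i]).conjugate() for j in range(n)] for i in range(n)]

def close(a, b, tol=1e-12):
    """Element-wise comparison that tolerates floating-point rounding."""
    n = len(a)
    return all(abs(a[i][j] - b[i][j]) < tol for i in range(n) for j in range(n))

for name, gate in [("H", H), ("X", X), ("Y", Y), ("Z", Z)]:
    print(name, "is unitary:", close(matmul(dagger(gate), gate), I2))
print("H Z H equals X:", close(matmul(matmul(H, Z), H), X))
```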

Technologies in focus: Superconducting vs. trapped-ion vs. photonic qubits

Three hardware approaches dominate the quantum landscape right now, and each makes different tradeoffs between speed, fidelity, and scalability.

Superconducting qubits, the approach used by IBM and Google, lead in scale and speed: IBM has scaled its processors to 433 and 1121 qubits, with gate speeds around 10 to 50 nanoseconds and fidelity approaching 99.9%. Trapped-ion systems from IonQ and Quantinuum offer higher fidelity at 99.99% and all-to-all qubit connectivity, but operate more slowly. Photonic approaches use photons as qubits and can operate at room temperature, though scaling remains a significant challenge.

| Technology | Key players | Qubit count | Gate speed | Fidelity |
|---|---|---|---|---|
| Superconducting | IBM, Google | Up to 1121 | 10 to 50 ns | ~99.9% |
| Trapped-ion | IonQ, Quantinuum | Tens to hundreds | Microseconds | ~99.99% |
| Photonic | PsiQuantum, Xanadu | Early stage | Very fast | Moderate |
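
A rough way to see why one extra "nine" of fidelity matters is to compound the per-gate figures from the table over a whole circuit. The sketch below assumes every gate fails independently with the same probability, which is a simplification (real devices have correlated errors, idle errors, and measurement errors), so treat the outputs as order-of-magnitude estimates only. The function names are made up for this example.

```python
import math

def circuit_success(fidelity, n_gates):
    """Estimated chance that n_gates all succeed, assuming independent errors."""
    return fidelity ** n_gates

def gates_until(fidelity, threshold=0.5):
    """Largest gate count whose estimated success stays at or above threshold."""
    return math.floor(math.log(threshold) / math.log(fidelity))

for name, fidelity in [("superconducting", 0.999), ("trapped-ion", 0.9999)]:
    print(f"{name}: 1000 gates -> {circuit_success(fidelity, 1000):.3f}, "
          f"50% point at ~{gates_until(fidelity)} gates")
```

Under this simple model, a tenfold reduction in per-gate error buys roughly a tenfold deeper circuit before the result becomes a coin flip. Fidelity is only one axis, though: the slower gate times in the table mean that a deeper trapped-ion circuit also takes longer to run.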

Pro Tip: No single technology has won the quantum hardware race. Hybrid architectures that combine superconducting speed with trapped-ion fidelity are actively being explored, and the winner in 2030 may not even be the leader today.

Current state: Achievements, limitations, and benchmarks

We are in what researchers call the NISQ era, short for Noisy Intermediate-Scale Quantum. These are systems with 50 to 1000 qubits that are real, operational, and impressive, but not yet competitive for most practical problems.

Google's Willow processor achieved a landmark result: below-threshold surface code error correction, with a logical error rate of 0.143% per error-correction cycle at distance-7 and an error suppression factor of 2.14, meaning the logical error rate fell by about that factor each time the code distance increased by two. That is a genuine milestone in error correction research. IBM's processors have scaled to 433 and 1121 physical qubits. These are real engineering achievements.
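
To get a feel for what a suppression factor of 2.14 implies, the sketch below extrapolates the distance-7 figure to larger codes. Two assumptions go beyond this article: that the factor applies each time the code distance grows by two and stays constant as the code grows (which is not guaranteed), and that a surface-code patch of distance d uses the textbook count of 2d² − 1 physical qubits (real devices may add a few more). The function names are invented for the example, and the projections are not measured results.

```python
def surface_code_qubits(distance):
    """Textbook qubit count for one patch: d^2 data qubits plus d^2 - 1 measure qubits."""
    return 2 * distance ** 2 - 1

def projected_logical_error(distance, base_error=0.00143, base_distance=7, lam=2.14):
    """Extrapolate the logical error rate, dividing by lam for every +2 in distance.

    ASSUMPTION: lam stays constant at larger distances.
    """
    steps = (distance - base_distance) / 2
    return base_error / lam ** steps

for d in (7, 9, 11, 15, 21):
    print(f"d={d:2d}: {surface_code_qubits(d):4d} qubits, "
          f"projected error ~ {projected_logical_error(d):.2e}")
```

The pattern to notice is that the error falls exponentially with distance while the qubit count grows only quadratically, which is why operating below threshold is treated as such an important milestone.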

But NISQ devices show no practical advantage over classical computers for real-world tasks. The core problem is error rates. Physical qubits are fragile, and noise from the environment causes decoherence, the loss of quantum state, within microseconds to milliseconds. Error correction helps, but it requires roughly 1000 physical qubits to protect a single logical qubit reliably.
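
The overhead figure is easier to appreciate as arithmetic. This short sketch applies the roughly 1000-to-1 ratio quoted above to the physical qubit counts mentioned in this guide, plus a hypothetical million-qubit machine; the ratio is an estimate and the helper names are made up for the example.

```python
def logical_qubits(physical_qubits, overhead=1000):
    """Whole logical qubits available at a given physical-per-logical overhead."""
    return physical_qubits // overhead

def physical_needed(logical, overhead=1000):
    """Physical qubits required for a target number of logical qubits."""
    return logical * overhead

for physical in (433, 1121, 1_000_000):
    print(f"{physical:>9,} physical -> {logical_qubits(physical):,} logical")
print(f"100 logical qubits need ~{physical_needed(100):,} physical")
```

At this ratio, even the largest chip mentioned here would yield a single fully protected logical qubit, which is why headline physical qubit counts say little about usable computing power.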

Key limitations right now:

- High error rates that compound across long circuits

- Decoherence times too short for complex algorithms

- Cryogenic cooling requirements for superconducting systems

- Limited connectivity between qubits in most architectures

- No demonstrated quantum advantage on commercially relevant problems

Follow industry news on quantum to track which milestones actually signal progress versus which are benchmark theater.

What quantum computers can do (and what they can't)

Quantum computing's practical value is real but narrow. The technology excels where classical computers hit hard mathematical walls, not across the board.

Quantum's potential spans optimization, chemistry simulation, finance, and machine learning, though risks including decoherence and high error rates still limit near-term deployment. Here is a realistic breakdown:

- Optimization: Logistics, supply chain, and portfolio optimization involve combinatorial problems that scale exponentially for classical computers. Quantum algorithms like QAOA offer a potential path forward.

- Chemistry simulation: Modeling molecular interactions at the quantum level is naturally suited to quantum hardware. Drug discovery and materials science stand to benefit most.

- Finance: Risk modeling, options pricing, and fraud detection involve probability distributions that quantum amplitude estimation could accelerate.

- Machine learning: Quantum kernels and sampling methods may speed up specific ML tasks, though this remains largely theoretical.

Pro Tip: Quantum is not a universal speedup. If your problem does not involve exponential classical complexity, a classical computer will almost certainly outperform quantum hardware for the foreseeable future.

The debate: Scaling, error correction, and skepticism

Not everyone believes quantum computing will deliver on its promises. The debate between engineering optimists and physics skeptics is worth understanding before you make investment or strategic decisions.

"Noise fundamentally limits scalability. There is no evidence of natural quantum error correction, and hard physical limits may cap practical systems at 200 to 1000 qubits." — Gil Kalai, quantum skeptic

The optimist camp points to Google Willow's error correction results and IBM's roadmap as evidence that engineering can overcome noise. The skeptic camp argues that surface code error correction requiring roughly 1000 physical qubits per logical qubit, with real-time decoding latency around 63 microseconds, makes fault-tolerant systems economically and physically impractical.

Key arguments on both sides:

- Optimists: Error rates are improving exponentially with better fabrication and control; hybrid classical-quantum approaches can work around current limits.

- Skeptics: Environmental noise is a fundamental physical constraint, not just an engineering problem; no system has demonstrated sustained logical qubit operation at scale.

- Neutral ground: Both sides agree that near-term quantum advantage on real problems has not been demonstrated. The disagreement is about whether it ever will be.

For investors and industry planners, the honest answer is that quantum computing is a long-duration bet with genuine technical uncertainty, not a guaranteed transformation.

Future outlook: Quantum's path ahead and what to watch

The next decade will separate genuine quantum progress from sustained hype. Knowing what signals to watch makes all the difference.

Superconducting leads in scale and speed while trapped-ion leads in fidelity and connectivity, and no clear winner has emerged. That competitive tension is actually healthy for the field. Watch these indicators to evaluate real progress:

- Logical qubit demonstrations: Physical qubit counts are marketing. Logical qubit counts with sustained low error rates are the real metric.

- Algorithm benchmarks on real problems: Quantum advantage claims should be tested against the best classical algorithms, not outdated baselines.

- Error correction milestones: Progress toward fewer physical qubits per logical qubit signals genuine hardware improvement.

- Hybrid quantum-classical results: Near-term value will likely come from quantum processors accelerating specific subroutines within classical workflows.

- Commercial deployments: Pilot programs in pharma, finance, and logistics with measurable outcomes are stronger signals than lab demonstrations.

Keep following quantum trends as the hardware and algorithm landscape shifts over the next few years.

Stay ahead of the quantum curve

Quantum computing is moving fast, and the gap between informed observers and those relying on headlines is widening every quarter. Whether you are tracking hardware milestones, evaluating investment theses, or planning technology strategy, having a reliable source of analysis matters.

2026new.com covers the latest quantum insights alongside broader technology, finance, and science developments, giving you the context to separate real breakthroughs from noise. Bookmark the site, set up alerts, and make it part of your regular reading if you want to stay genuinely informed as quantum computing moves from research labs toward real-world deployment.

Frequently asked questions

How are qubits different from classical bits?

Qubits leverage superposition to represent both 0 and 1 simultaneously until measured, while classical bits are always one fixed value. That difference is what enables quantum parallelism.

What are the main challenges in building quantum computers?

Scaling qubit counts while controlling noise and decoherence is the central engineering challenge. Surface code error correction requires roughly 1000 physical qubits per logical qubit, and noise may impose fundamental limits that engineering alone cannot solve.

When will quantum computers outperform classical computers in practice?

NISQ devices show no practical advantage over classical systems for real-world tasks today. Most experts expect fault-tolerant systems capable of genuine advantage to be at least a decade away.

What industries could benefit most from quantum computing?

Optimization, chemistry simulation, finance, and machine learning are the application areas with the clearest potential quantum use cases, assuming error rates continue to improve.

Is quantum computing hype or real progress?

Both. Google Willow's error correction milestone is genuine progress, but practical quantum advantage on commercially relevant problems has not been demonstrated yet.